Contents

| ⏱ Date de fondation | 2011 |

| 🚩 Pays | France |

| 🔔 Français | Oui |

| 💶 Devises du compte | EUR, USD, CAD, XAF |

| 💲 Les systèmes de paiement | VISA, MasterCard, MyBuh, Perfect Money, MoneyGO, Bitcoin, Ethereum, USDT |

| 🎁 Bonus de premier dépôt | 800 EUR |

| 📞 Support | 24 |

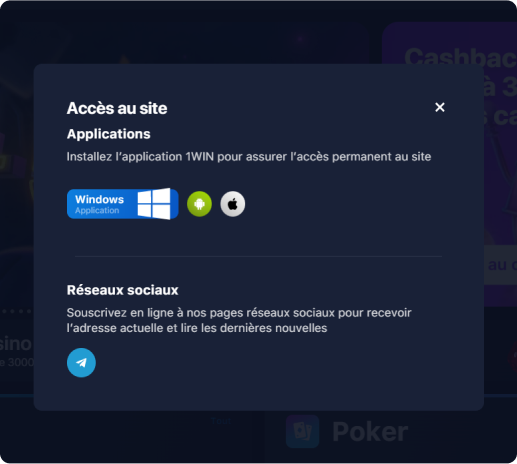

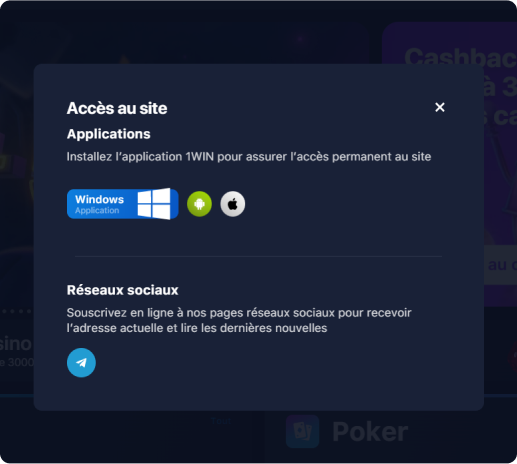

| 📱 Application mobile | Oui |

| 💰 Mise minimum | 1 EUR |

HOW TO FIND THE OFFICIAL SITE OF THE 1WIN BETTING COMPANY?


1WIN: the official site for everyday betting!

The popularity of betting shows in the hundreds of thousands of bets placed around the world. It is not about the thrill, but about the pleasure of the game. As you know, demand drives supply, which is why there are more than 1,000 betting sites on the market. The 1WIN mirror stands out from them with high odds, high limits and the fastest payouts. In every search engine, a working address for this bookmaker can always be found in one place. It is no accident that the official 1WIN site is visited by 1 million people worldwide every day.

SORSS

Promo code

HOW TO REGISTER ON THE 1WIN MIRROR?


Once we have found a trustworthy bookmaker in 1WIN, we need to register. Follow the link below and choose the method that suits us best. There are three:

- In 1 click*

- Social networks

- Full registration**

* The easiest and fastest

** The most secure method, which lets you log in even when the official 1WIN site is blocked.

Remember your login and password and write them down carefully! Protect your password by adding numbers and symbols to the letters. This is the most important point for the security of your account and your funds! Sign up using the 1WIN button.

WHY CHOOSE THIS SPORTS BETTING COMPANY?

Best betting line

Sports include football, basketball, baseball, hockey, tennis, handball, floorball, boxing, motorsports, rugby, American football, snooker, table tennis, cricket, darts, volleyball, futsal, badminton, beach volleyball and MMA. On top of these, there is a very large number of esports games covering hundreds of championships.

Convenient sports betting

Convenience is an essential factor in sports betting. That is why you can download 1WIN to your mobile devices: apps exist for Android and iOS. They let you place sports bets anytime, anywhere.

This site always has the latest link to the 1WIN mirror, giving you the most up-to-date access.

Consistent advantages

Reliable payment of all winnings is another important criterion when choosing a bookmaker. 1WIN has not been the subject of any major complaints, and withdrawals are processed quickly. There are twelve payment systems, from Qiwi to bank cards!

1WIN: HOW TO BET?


It's very easy. Just register, fund your account, and select your sport and teams. Then choose what you want to bet on.

Everyone has their own methods and refines them over time. Most people like betting on the total in football. Particularly high totals can be caught in-play, that is, by betting while the match is already under way.

After entering the amount we want to stake, click the confirmation button. Once the bet is accepted, all that remains is to wait for the game to end.

If you win, you receive your stake multiplied by the odds. If you lose, you lose your stake. Alongside the official 1WIN site, you can also use the mobile apps and working mirrors.
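To make the payout rule concrete, here is a worked example. It assumes decimal odds (the text does not say which odds format 1WIN displays) and uses an illustrative stake of 10 EUR at odds of 2.50:

$$
\text{return} = \text{stake} \times \text{odds} = 10\ \text{EUR} \times 2.50 = 25\ \text{EUR}, \qquad \text{profit} = 25 - 10 = 15\ \text{EUR}
$$

If the same bet loses, the loss is the 10 EUR stake.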

1WIN: CONSTANT, UNINTERRUPTED ACCESS

1WIN is an offshore bookmaker, so its domain names have been blocked over time. If you want continuous access to the bookmaker, bookmark this site. That way you won't have to search for a 1WIN mirror every day.

You can also use VPNs and other encryption tools. Only choose reliable betting companies with fair payouts and a wide betting line!
